Hello Codeforces!

The recent Educational Round 52 featured problem 1065E - Side Transmutations, a counting problem that most people solved using ad-hoc observations. However, like many problems of the form "count the number of objects up to some symmetry/transformation", it can be solved using a neat little theorem called "Burnside's Lemma". I would like to introduce it to those who do not know it yet, using the recent task as an example (even though it is complete overkill for this problem).

This is my first tutorial, so any feedback is greatly appreciated.

Prerequisites

Definition of a group

Group actions

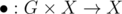

In these kinds of counting problems, you always have some set of objects $$$X$$$ you are working with and some set of operations $$$G$$$ you can use to transform one object into another. Formally, we have a function $$$\bullet : G \times X \to X$$$ so that $$$g \bullet x$$$ is the object we get when applying operation $$$g$$$ to object $$$x$$$.

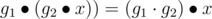

If the operations form a group, and the operations fulfill two rules, $$$e \bullet x = x$$$ (where $$$e$$$ is the identity element of $$$G$$$) and $$$g_1 \bullet (g_2 \bullet x) = (g_1 \cdot g_2) \bullet x$$$, then we call $$$\bullet$$$ a "group action". The rules basically say that the action is compatible with the group structure and are usually easy to verify.

Orbits and fixed points

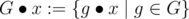

For some $$$x \in X$$$, we can look at its orbit $$$G \bullet x = \{g \bullet x \mid g \in G\}$$$: The set of all objects which can be attained by doing some transformation on $$$x$$$. We immediately see why this is a useful notion for us: Orbits are exactly the classes of objects which we consider equal, because they only differ by some symmetry or other transformation. Our goal is to count the number of orbits, which is sometimes denoted by $$$|X / G|$$$.

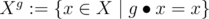

For some $$$g \in G$$$, we may also look at its fixed points $$$X^g = \{x \in X \mid g \bullet x = x\}$$$: The set of objects which are not changed by the operation $$$g$$$. We'll see in a minute why we need this notation.

Our task

In our task, the objects are all strings of length n over the alphabet A. The operations are slightly more difficult. We cannot just take all single moves as described in the task as our operations, because they do not form a group: It is not possible to combine two moves into another (single) move.

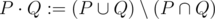

However, note that the order in which moves are made is not significant. Also, doing a move twice is exactly the same as not doing it at all. Therefore, an operation can be seen as a subset of all allowed moves, applied in some arbitrary order. So we choose $$$G$$$ as the set of subsets (the powerset!) of $$$B = \{b_1, \dots, b_m\}$$$. We also need a group structure on $$$G$$$, and the correct way to get it is this: $$$P \cdot Q = (P \cup Q) \setminus (P \cap Q)$$$. Think of this as the element-wise XOR of the two sets (remember, $$$P$$$ and $$$Q$$$ are subsets of $$$B$$$). We need XOR here because of our observation that doing a move twice is the same as not doing it at all.

Let's have a look at the orbits and fixed points of this group action. The orbits are exactly the sets of strings which can be transformed into each other by some sequence of moves. Perfect, that is exactly what we wanted.
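The group and its action can be sketched in a few lines of Python as a sanity check. The sketch encodes a subset of $$$B$$$ as a bitmask, so the group operation becomes plain XOR. It assumes that a move with parameter $$$k$$$ exchanges the first $$$k$$$ and the last $$$k$$$ characters and reverses both blocks; that reading of the task, as well as the names `apply_move`, `act` and `is_group_action`, are assumptions of this sketch.

```python
from itertools import product


def apply_move(s, k):
    """Assumed move: exchange the first k and last k characters, reversing both."""
    n = len(s)
    return s[n - k:][::-1] + s[k:n - k] + s[:k][::-1]


def act(mask, s, b):
    """Apply every move b[i] whose bit i is set in mask (the order does not matter)."""
    for i, k in enumerate(b):
        if (mask >> i) & 1:
            s = apply_move(s, k)
    return s


def is_group_action(n, alphabet, b):
    """Brute-force check of both group action rules on all strings of length n."""
    strings = ["".join(t) for t in product(alphabet, repeat=n)]
    masks = range(1 << len(b))
    for x in strings:
        if act(0, x, b) != x:  # the identity (empty subset) changes nothing
            return False
        for g1 in masks:
            for g2 in masks:
                # acting twice equals acting once with the XOR of the two subsets
                if act(g1, act(g2, x, b), b) != act(g1 ^ g2, x, b):
                    return False
    return True
```

For example, `is_group_action(5, "ab", [1, 2])` checks both rules for every string of length 5 over a two-letter alphabet.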

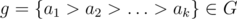

Now consider some element $$$g = \{a_1, \dots, a_k\} \in G$$$ with $$$a_1 > a_2 > \dots > a_k$$$. How many letters are swapped by this transformation? Move $$$a_1$$$ swaps $$$a_1$$$ pairs of letters. Then, move $$$a_2$$$ swaps $$$a_2$$$ of these pairs back, so after $$$a_1$$$ and $$$a_2$$$, $$$a_1 - a_2$$$ pairs of letters have been swapped. Then, move $$$a_3$$$ swaps $$$a_3$$$ more pairs, which have all been swapped back in the last step, so $$$a_1 - a_2 + a_3$$$ pairs have moved, and so on. So after all moves, the number of pairs of letters which have (effectively) been swapped is $$$l = \sum_{i=1}^{k} (-1)^{i+1} a_i$$$. So how does a string look that is a fixed point of this transformation? For each pair of swapped letters, we can only choose one, because the other has to be the same for the operation not to change the string. So we end up with $$$|A|^{n-l}$$$ choices for the string and conclude $$$|X^g| = |A|^{n-l}$$$.
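The fixed-point count can be checked by brute force on small inputs. The following sketch enumerates all strings, applies an operation (a bitmask over the allowed moves) and compares the number of unchanged strings with $$$|A|^{n-l}$$$. As before, the move semantics (exchange the first $$$k$$$ and last $$$k$$$ characters, reversing both) and all function names are assumptions made for illustration.

```python
from itertools import product


def apply_move(s, k):
    """Assumed move: exchange the first k and last k characters, reversing both."""
    n = len(s)
    return s[n - k:][::-1] + s[k:n - k] + s[:k][::-1]


def act(mask, s, b):
    """Apply every move b[i] whose bit i is set in mask."""
    for i, k in enumerate(b):
        if (mask >> i) & 1:
            s = apply_move(s, k)
    return s


def swapped_pairs(mask, b):
    """l = a_1 - a_2 + a_3 - ... for the chosen moves a_1 > a_2 > ..."""
    chosen = sorted((k for i, k in enumerate(b) if (mask >> i) & 1), reverse=True)
    return sum(k if j % 2 == 0 else -k for j, k in enumerate(chosen))


def count_fixed_points(mask, n, alphabet, b):
    """Count the strings of length n that the operation mask leaves unchanged."""
    strings = ("".join(t) for t in product(alphabet, repeat=n))
    return sum(1 for x in strings if act(mask, x, b) == x)
```

With `n = 6`, `alphabet = "ab"` and `b = [1, 2, 3]`, every mask should satisfy `count_fixed_points(mask, n, alphabet, b) == 2 ** (n - swapped_pairs(mask, b))`.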

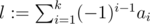

Burnside's lemma

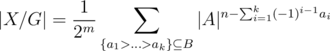

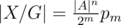

Now that all preparations are done, Burnside's lemma gives a straight-up formula for the answer of the problem:

$$$|X / G| = \frac{1}{|G|} \sum_{g \in G} |X^g|$$$

That's it! Once you understand how many fixed points there are for each operation, you can use the formula to get the number of orbits.

This is nice in multiple ways. First, since you only look at the fixed points for a single operation at a time, finding the fixed points is often not very hard, as we saw above. Second, applying Burnside's lemma generally requires almost no ad-hoc thinking: You simply define the group and the action, look at the fixed points, and arrive at the solution without the need for any clever insight, but just by following the plan. Because of these reasons, many counting problems become significantly easier when applying Burnside's lemma.
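To see the lemma at work, one can count orbits directly and compare the result with the average number of fixed points. The sketch below does both by brute force for the string task. It is only meant for tiny inputs, and it assumes that a move with parameter $$$k$$$ exchanges the first $$$k$$$ and last $$$k$$$ characters and reverses both blocks. The function names are made up for this example.

```python
from itertools import product


def apply_move(s, k):
    """Assumed move: exchange the first k and last k characters, reversing both."""
    n = len(s)
    return s[n - k:][::-1] + s[k:n - k] + s[:k][::-1]


def act(mask, s, b):
    """Apply every move b[i] whose bit i is set in mask."""
    for i, k in enumerate(b):
        if (mask >> i) & 1:
            s = apply_move(s, k)
    return s


def count_orbits_brute(n, alphabet, b):
    """Count orbits directly by marking the whole orbit of each unseen string."""
    seen = set()
    orbits = 0
    for t in product(alphabet, repeat=n):
        x = "".join(t)
        if x in seen:
            continue
        orbits += 1
        seen.update(act(mask, x, b) for mask in range(1 << len(b)))
    return orbits


def count_orbits_burnside(n, alphabet, b):
    """Average the number of fixed points over all group elements."""
    group_size = 1 << len(b)
    strings = ["".join(t) for t in product(alphabet, repeat=n)]
    total = sum(
        1 for mask in range(group_size) for x in strings if act(mask, x, b) == x
    )
    return total // group_size  # the lemma guarantees that this division is exact
```

Both functions should return the same number, for instance 6 for `n = 3`, `alphabet = "ab"`, `b = [1]`.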

Solving our problem

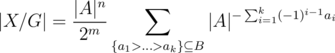

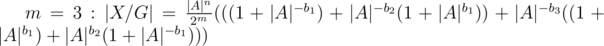

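Since we have already looked at the fixed points of our action, we can just plug it into the formula to get the solution:

$$$|X / G| = \frac{1}{2^m} \sum_{g \in G} |A|^{n - l}$$$

Here the sum runs over all subsets $$$g = \{a_1 > a_2 > \dots > a_k\}$$$ of $$$B$$$, $$$l = a_1 - a_2 + a_3 - \dots$$$ is the number of effectively swapped pairs for that subset, and $$$|G| = 2^m$$$.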

Implementing the formula in the straightforward way using bitmasks to enumerate subsets gives correct answers. Most problems where Burnside's lemma is needed are solved at this point.
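A minimal sketch of such a bitmask implementation might look as follows. It uses exact integers instead of modular arithmetic to keep the example short, and the function name `count_orbits_bitmask` and its parameters are made up for this illustration. For each subset it computes the alternating sum $$$l = a_1 - a_2 + a_3 - \dots$$$ of the chosen moves in decreasing order and adds $$$|A|^{n-l}$$$.

```python
def count_orbits_bitmask(n, size_a, b):
    """Evaluate (1 / 2^m) * sum over subsets of |A|^(n - l) by enumerating bitmasks."""
    m = len(b)
    total = 0
    for mask in range(1 << m):
        # moves contained in this subset, largest first
        chosen = sorted((b[i] for i in range(m) if (mask >> i) & 1), reverse=True)
        # alternating sum: number of pairs that are effectively swapped
        l = sum(k if j % 2 == 0 else -k for j, k in enumerate(chosen))
        total += size_a ** (n - l)
    return total // (1 << m)  # exact by Burnside's lemma
```

The running time is exponential in `len(b)`, so this is only usable when there are few allowed moves.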

Making it fast

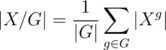

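However, in this problem $$$m$$$ can be on the order of $$$10^5$$$, but the bitmask implementation takes time exponential in $$$m$$$, which is clearly too slow. We need to find some way to evaluate the formula faster. First move $$$|A|^n$$$ out of the sum to get $$$\frac{|A|^n}{2^m} \sum_{g \in G} |A|^{-l}$$$, where $$$l$$$ is again the number of effectively swapped pairs for $$$g$$$.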

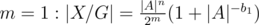

Let's have a look at how this sum looks for some small values of m:

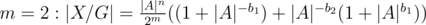
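For reference, the sum $$$\sum_{g \in G} |A|^{-l}$$$ (without the leading factor) can also be expanded by hand from the fixed-point count. The expansion below for $$$m = 1, 2, 3$$$ is a re-derivation that lists the subsets one by one, so its layout may differ from the figure:

$$$m = 1: \quad 1 + |A|^{-b_1}$$$

$$$m = 2: \quad 1 + |A|^{-b_1} + |A|^{-b_2} + |A|^{-(b_2 - b_1)}$$$

$$$m = 3: \quad \left(1 + |A|^{-b_1} + |A|^{-b_2} + |A|^{-(b_2 - b_1)}\right) + |A|^{-b_3} \left(1 + |A|^{b_1} + |A|^{b_2} + |A|^{b_2 - b_1}\right)$$$

In each step, the subsets that do not contain the new largest element reproduce the previous sum, while the subsets that do contain it contribute $$$|A|^{-b_m}$$$ times the previous sum with every exponent negated.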

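We can use this pattern to evaluate the sum in linear time. Let $$$p_0 = r_0 = 1$$$. $$$p_i$$$ contains the above sum without the leading factor, considering only $$$\{b_1, \dots, b_i\}$$$. $$$r_i$$$ is the same, but for each summand we use its multiplicative inverse. A subset of $$$\{b_1, \dots, b_i\}$$$ either omits $$$b_i$$$, or contains it as its largest element, in which case the signs of all remaining elements flip. From the above pattern we deduce:

$$$p_i = p_{i-1} + |A|^{-b_i} \cdot r_{i-1}$$$

$$$r_i = r_{i-1} + |A|^{b_i} \cdot p_{i-1}$$$

and finally, $$$|X / G| = \frac{|A|^n}{2^m} \cdot p_m$$$, which we can easily calculate in linear time to get AC.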
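A minimal sketch of this computation, assuming the recurrences $$$p_i = p_{i-1} + |A|^{-b_i} r_{i-1}$$$ and $$$r_i = r_{i-1} + |A|^{b_i} p_{i-1}$$$ that follow from the definitions of $$$p_i$$$ and $$$r_i$$$. The modulus 998244353 is assumed here to be the one requested by the task and is therefore a parameter. The sketch also assumes that $$$|A|$$$ is invertible modulo `mod` (otherwise `pow` raises `ValueError`), and it needs Python 3.8 or newer for `pow` with a negative exponent. The function name is made up for this example.

```python
def count_orbits_linear(n, size_a, b, mod=998244353):
    """Evaluate |A|^n / 2^m * p_m modulo mod using the p_i / r_i recurrences."""
    a = size_a % mod
    inv_a = pow(a, -1, mod)  # modular inverse of |A|; fails if it does not exist
    p, r = 1, 1  # p_0 = r_0 = 1 (only the empty subset)
    for k in sorted(b):  # each new b_i must be larger than all previous ones
        # the right-hand side uses the old p and r, as the recurrences require
        p, r = (p + pow(inv_a, k, mod) * r) % mod, (r + pow(a, k, mod) * p) % mod
    inv_group = pow(pow(2, len(b), mod), -1, mod)  # 1 / |G| = 1 / 2^m
    return pow(a, n, mod) * inv_group % mod * p % mod
```

Each step performs two modular exponentiations, so the total work is linear in $$$m$$$ up to a logarithmic factor.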

Practice

You can test your newly acquired skills by solving 101873B - Buildings or any of the problems listed here.

Auto comment: topic has been updated by TwoFx (previous revision, new revision, compare).


What do you mean by g1.(g2.x) = (g1.g2).x? Need your help here.

G is a group, therefore it has an operation.

$$$g_1 \bullet (g_2 \bullet x)$$$ is "apply $$$g_1$$$ on the result of applying $$$g_2$$$ on x"

$$$(g_1.g_2)\bullet x$$$ is "use the group operation on $$$g_1$$$ and $$$g_2$$$ and apply that on x"

Does the order of g1 and g2 matter, or can we apply them to x in any order we want?

It matters. In some specific problem it may not matter but in general it does.

An example where it matters is rotating a face of a Rubik's cube. Rotating the right face and then the top face results in a different configuration than rotating the top face and then the right face.

Thnxx