These are the Chinese national training team papers, translated into English with several software tools.

I find this a much better way to read these PDF papers than Google Translate or Foxit Reader Translate (despite the limitations, see below), so I think it may be useful to other people too.

Original papers download:

- https://github.com/OI-wiki/libs/tree/master/集训队历年论文

- https://github.com/tshu-w/ICPC/tree/master/references/国家集训队论文

- http://download.csdn.net/album/detail/657/1/1

- https://github.com/enkerewpo/OI-Public-Library

Auto-translated papers download:

I've only translated some topics, but I will upload more in the future.

Update:

A better method is now used to translate the PDFs (some PDFs are uploaded).

- I uploaded the method and programs used to translate those PDFs (the general method was already explained in the remark section).

- I uploaded all the translated LaTeX sources and compiled PDF files of 2020 that I've done so far (currently only topic 1, at 2020/1-translated.pdf). Note that the PDF preview feature on the site might not work. However, the source code, programs and methods are all available, so anyone can translate and upload them.

There are some commercial tools for converting PDF back to LaTeX source (Mathpix, for example), but they must be paid for and I don't know what the quality would be for Chinese. It would be easier to get the source code.

I'll update all the files if I find a better way to translate them.

I could not find any existing post that does the same thing, despite a lot of blog posts that request it: 1 2 3. I find it really hard to copy and paste each line into a translator program (or select each line), and translating the whole thing with Google Translate (or similar) removes the figures and formulas, so the side-by-side comparison is helpful.

Issues/possible improvements/contributions:

It's really hard to find a good site/program to translate PDF files. Does anyone know one better than this one?

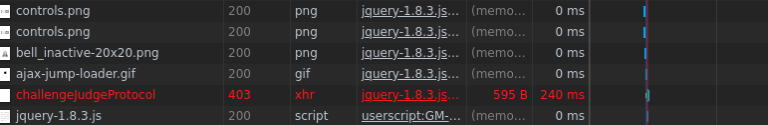

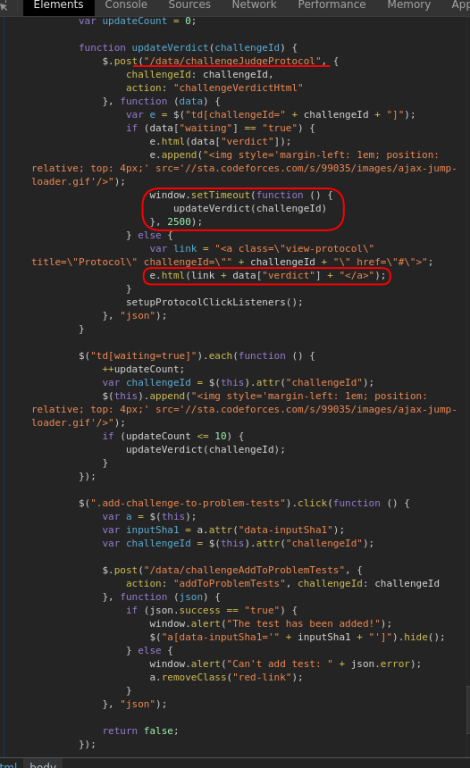

The one I'm using sometimes fails badly and stretches or shrinks the text. Sample page (low resolution version). However, it's still better than the alternatives (Foxit Reader Translate, Google document translate), which require highlighting or copying each sentence, scrolling two windows in parallel, and/or overflow the page width so that horizontal scrolling is required.

I suppose that the original Chinese characters are still preserved inside the PDF; however, direct copy and paste results in corrupted data.

If anyone can figure out how to extract the Chinese characters without OCR, that would improve the translation quality (the OCR is currently imperfect and introduces some errors).

(Some metadata in a PDF shows that it was made with Microsoft Word 2013 and/or Acrobat 11.0.0.)
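If someone wants to experiment with extracting the text directly, one rough way to tell whether a page came out usable is to measure how much of the extracted string is actually Chinese. The sketch below is not part of my uploaded programs; the function names and the 0.3 threshold are assumptions for illustration, and the input string is assumed to come from whatever PDF text-extraction tool you try.

```python
def is_cjk(ch: str) -> bool:
    """True for characters in the main CJK ideograph blocks."""
    cp = ord(ch)
    # CJK Unified Ideographs and CJK Unified Ideographs Extension A
    return 0x4E00 <= cp <= 0x9FFF or 0x3400 <= cp <= 0x4DBF


def cjk_ratio(text: str) -> float:
    """Fraction of non-whitespace characters that are CJK ideographs."""
    chars = [c for c in text if not c.isspace()]
    if not chars:
        return 0.0
    return sum(1 for c in chars if is_cjk(c)) / len(chars)


def needs_ocr(page_text: str, threshold: float = 0.3) -> bool:
    """Guess that direct extraction failed if too little of the text is Chinese.

    The threshold is an arbitrary illustrative value, not a tuned one.
    """
    return cjk_ratio(page_text) < threshold
```

A page whose extracted text is mostly mojibake or empty would be flagged for OCR, while a page that extracts cleanly could be sent to the translator directly. Pages that are mostly formulas or English would also be flagged, so the threshold would need adjusting per paper.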

The images, math formulas and pseudo code listings are not preserved.

This is a limitation of the ABBYY OCR tool. Although it can be fixed manually, I'm not going to do that.

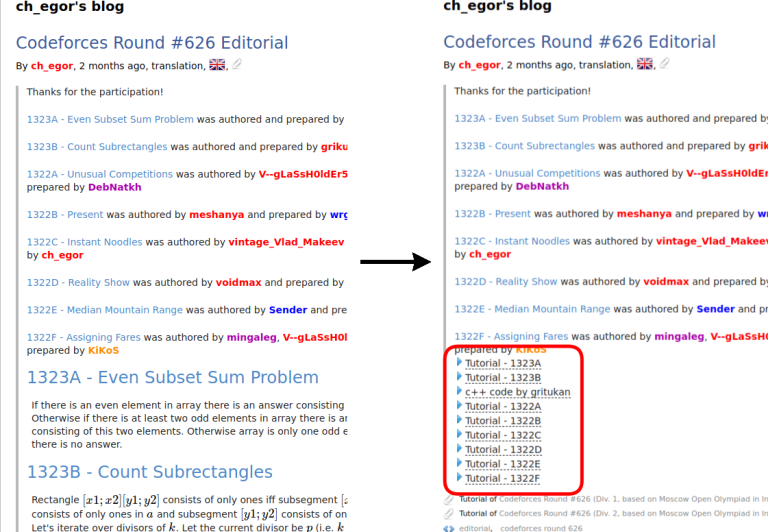

You can also write blog posts to explain the techniques (usually in English; however, Chinese HTML is still easier to translate than Chinese PDF).

- Or find existing content (in English) that describes those techniques.
