Update 2 — Thanks adamant for his Subset convolution interpretation blog. It does simplifies a lot of things.

Update 1 -

Let's consider this problem -

We have a function subset_convolution(A,B) it gives us Subset Sum Convolution of two sets $$$A, B$$$.

Let's say we array $$$A$$$ with $$$A[0]=1$$$ and we want to find A^K defined as $$$A$$$ convoluted with itself $$$K$$$ times.A^2 = subset_convolution(A,A)A^3 = subset_convolution(A^2,A)

...A^k = subset_convolution(A^{k-1},A)

The best I can think right now is using something on lines of fast exponential to achieve $$$O(log K*N^2*2^N)$$$ time where $$$2^N$$$ is the size of A. Also, there exist some solutions $$$O(N^3*2^N)$$$ using the fact that the majority of the multiplications will be with A[0] and permutations & combinations.

But it can be done in $$$O(N^2*2^N)$$$ using tricks well known at some places. Idk why it works tho.

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

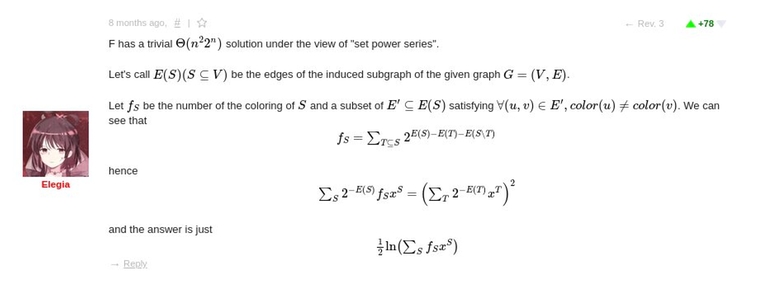

TLDR — Just me rambling about the old ARC problem I was solving for the last 3 days. Something "set power series" similar to "power series for polynomials" I came across and I have an iota idea about it right now. Also amplifying some unanswered bugaboo related to it in the discussion of that ARC round. Spoiler is just very very briefly mention different solutions I had for ARC105F problem.

The logarithm of 'set power series' is a function $$$g(x)=\sum_{S} g_{S} x^{S}$$$ such that $$$f(x) = \sum_{S} (\sum_{\mathcal{P}} \prod_{P_i \in \mathcal{P}} g_{P_i}) x^{S}$$$, where the inner summation goes over all unordered partitions $$$P$$$ of $$$S$$$.

dario2994 submission on the same problem implements a small library for subsets with functions namely and/or/xor/subset convolutions along with log and exp of set power series.

(First to answer dario2994 comment — Yes, there does exist a clever way (and maroonrk's submission) to compute subset convolution.)

I did asked some of my friends and seniors doing MATH major — where can I read formal definitions of "set power series" since googling it just gave me useless results and none of them ever heard it.

Q1 — What exactly is the definition of exp of set power series? (One implemented by dario2994 and mentioned by mmaxio). Where can I read about more similar definitions?

mmaxio mentions Lu Kaifeng's report, googling about his report along with keywords such as "set power series" doesn't yield helpful results. But then I came across this blog. It has a code snippet and mentions For details, please refer to the 2015 Proceedings of the National Training Team Lu Kaifeng, "The Properties and Application of Set Power Series and Its Fast Algorithm".

It's a 17 page report published in 2015 in Chinese, I wasn't able to find an English version. Hence must be a well-known problem in China and hence a "trivial solution" by Elegia. I tried auto translating it using the method mentioned by z4120 in Auto-translated Chinese national IOI training team report papers but I messed up a step somewhere and ended up with just Chinese translated into Chinese, would really appreciate it if someone can translate it for me.

Q2 — mmaxio's comment looks like you can apply any function to the set power series by applying it to a "ranked" vector for each mask independently makes me much more interested in this. It opens the door to a hell of a lot of possibilities here (a reason for this blog here) integration, derivative, inverse, square root to name some among similar operations on polynomials and series.

I do remember the day when I learnt about the inverse and square root of a polynomial and was overwhelmed by it. On that day, I just concluded that "polynomials are just numbers". Later I have seen some problems which even used dp states as polynomials instead of integers. The observation "polynomials are just numbers" makes it easy for me to understand those editorials and those problems do excite me these days. Something similar for sets will for sure generalize it further.

Publishing this blog with the expectation that I would find more material to read about it since Google isn't my friend here.

Update 1 (solution) —

The (non-rigorous) conclusion I came to answer my question(why does/could it work for every function) was: essentially all the functions we usually use in these kind of problems can be given by their Taylor series. So stuff like exp, ln, square root, etc. is just a linear combination of the polynomial powers, hence if we can do multiplication we can do anything. Not very convincing, I know, but it was good enough for me.

Q1: Generally, exponent of $$$A$$$ is defined as

\begin{equation} \exp A = 1 + A + \frac{A^2}{2} + \dots + \frac{A^k}{k!} + \dots \end{equation}

In our case, if $$$A = \sum\limits_{i=0}^{2^n-1} a_i x^i$$$ is a formal polynomial where $$$x^i x^j$$$ is equal to $$$x^{i|j}$$$ if $$$i$$$&$$$j=0$$$ and $$$0$$$ otherwise, the exponent can be interpreted as

\begin{equation} \exp A = e^{a_0}\left[x^0+\sum\limits_{i=1}^{2^n-1} \left(\sum\limits_{i_1 | i_2 | \dots | i_k = i,\newline 0<i_1<i_2 <\dots<i_k,\newline \forall a, b : i_a \text{&} i_b = 0} a_{i_1} a_{i_2} \dots a_{i_k}\right) x^i\right] \end{equation}

So basically coefficient near $$$x^i$$$ in the exponent is the sum of products across all possible unordered disjunctive partitions of $$$i$$$.

Q2: "ranked" vector is basically the value-representation of groupoid ring element in the same way as the result of DFT are the values in certain points, ranked vectors can be interpreted as "polynomial over $$$\varepsilon$$$" values in points of {$$$0,\varepsilon$$$}$$$^n$$$ cube. So, to calculate $$$\exp A(x) \pmod{x^n-1}$$$ of a polynomial with DFT it's sufficient to change $$$A(\omega_k)$$$ with $$$e^{A(\omega_k)}$$$ and do the inverse. It works the same way with this transform where you apply the function to ranked vectors as a polynomials and do the inverse.

Worth noting that a[0] should be 0.

Regarding your answer to Q1. Your definition of exponential does not work in a general ring, because we do not have a definition of convergence for the infinite series. On the other hand, it makes sense if the ring satisfies one of the following:

Since the subset-convolution is a nilpotent operation (you don't state it but, with your notation, it is fundamental that $$$a_0=0$$$ or that $$$0<i_1$$$, otherwise it is false) we fall in the second case.

By the way, after reading Elegia's comment, I spent some time implementing a library. It shall be rather well-written and decently documented, you can find it here.

You're right, I shouldn't have said that it works like this in any ring. But in our case I'd say that exponent is well defined as long as $$$e^{a_0}$$$ exists (I amended the formula to properly reflect this). Surely, in arbitrary ring we may state that $$$e^0=1$$$ so taking exponent from $$$A$$$ such that $$$A(0)=0$$$ is always possible under this specific operation.

By the way, if we compute exponent of formal series (as in generating functions), do we use any specific norm? Technically can do something like $$$|A(x)-B(x)|=\frac{1}{k+1}$$$ for $$$A(x)-B(x)=x^k C(x)$$$, right?

Composition of generating functions

Let $$$f(x)=\sum a_i x^i$$$, $$$g(x)=\sum b_i x^i$$$ be two formal power series. If $$$b_0=0$$$, we can give a meaning to the composition $$$f(g(x))$$$. Why? For a reason very similar to the "nilpotency" described above.

Given a formal power series $$$h(x)=\sum c_i x^i$$$, its order $$$o(g)$$$ is the smallest natural number $$$n\ge 0$$$ such that $$$c_n\not=0$$$. It is straight-forward to check that $$$o(h_1\cdot h_2) = o(h_1)+o(h_2)$$$. Hence, if $$$o(g)>0$$$, then $$$o(g^i)\ge i$$$ and thus we may take the following one as a definition for the composition (notice that the right-hand side contains finitely many terms):

Thus, if the constant coefficient is null we can always compute the exponential of the generating function.

What if the constant coefficient is not $$$0$$$

As you noticed, if $$$f=\exp$$$, we can compute $$$\exp(g)$$$ even if $$$g(0)\not=0$$$, provided that we can give a meaning to $$$\exp(g(0))$$$. This is a general fact.

Assume that $$$f$$$ has radius of convergence equal to $$$R>0$$$, that is $$$\sum_{i\ge 0} |a_i|r^i < \infty$$$ for all $$$r<R$$$ (here we need that the underlying ring/field of coefficients is a Banach space). Then we can define $$$f(g(x))$$$ provided that $$$|g(0)|<R$$$. How? With a simple translation trick. Let $$$\tilde f(x) = f(x+b_0)$$$ (recall that $$$g(0)=b_0$$$). One can show (do it) that $$$\tilde f(x)$$$ is well-defined. Then we define

which is possible since $$$g(x)-b_0$$$ has null constant coefficient.

Since the radius of convergence of $$$\exp$$$ is infinity, we can always define $$$\exp(g)$$$ if the ring/field of coefficients is a Banach space.

Finally answering your question

No, when computing the exponential of a formal power series we do not use any norm.

Btw just a small note. If switch to using the xor-transform it is possible to speed up your implementation of the subset transform by a factor of 2 basically for free.

The idea is that from knowing

(x>>1, popcount(x))you can figure out whatxis.In a similar way you can figure out

x ^ yfrom knowing((x ^ y) >> 1, popcount(x) + popcount(y)).Btw there are some really cool things on top of adamant's answer.

ln(1 + x)has a similar taylor expansion asexp(x), so once you haveexpimplemented, you gotlnfor free.lnandexpyou can do subset-convolution, sincesubset-convolution(f, g) = exp(ln(f) + ln(g)). This also means that you can calculate powers quickly.lnandexpto solve equations. Like say you knowfandg, andhis unknown, wheref = subset-convolution(g, h). Then you can solve forhbyh = exp(ln(f) - ln(g)).Technically yes, but it seems you can as well do all of this by using these functions directly on the ranked vectors (ln, product and division in your case), so why making it slower by repeated logs and exps?