On February 24th, starting at 2 PM US Eastern Time, the 2023 ICPC North America South Regional* will take place. This year, I will host the official contest broadcast on the ICPC Live YouTube channel, starting shortly before the contest begins. During the stream, I'll:

- Follow the scoreboard and discuss the contest

- Discuss the problems and possibly solve a few of them (though unlike some of my past personal ICPC streams, solving problems and competing for the highest score will not be the main goal of the stream)

- Join SecondThread around two hours into the contest, when we will hop onto each other's streams via Zoom to discuss the problems shared between the South Division and the Pacific Northwest regional

- Talk about the teams competing to advance to the 2024 ICPC North America Championship

- Share some fun facts about the competing teams, in keeping with recent tradition. If you're a contestant on or the coach of a competing team and want to share a fun fact for me to read on stream while covering your team, please comment below or send me a DM (including your university and team name).

- Answer questions asked by viewers. If you have a question you'd like me to answer on stream, feel free to comment below!

- And more!

The South Division comprises the Mid-Atlantic, South Central, and Southeast North America regions. Teams from all three regions will compete on the same problemset and scoreboard, and the top few teams from each region, as well as potentially some extra teams from the combined scoreboard, will advance to the North America Championship. Historically, these three regions have been highly competitive, so we should be in for an exciting regional!

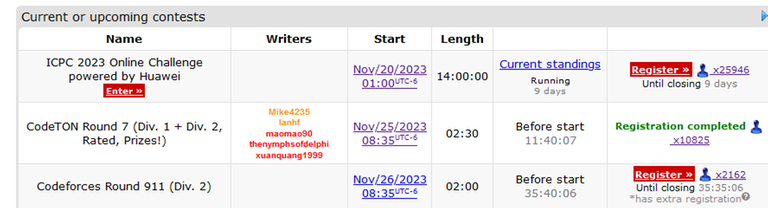

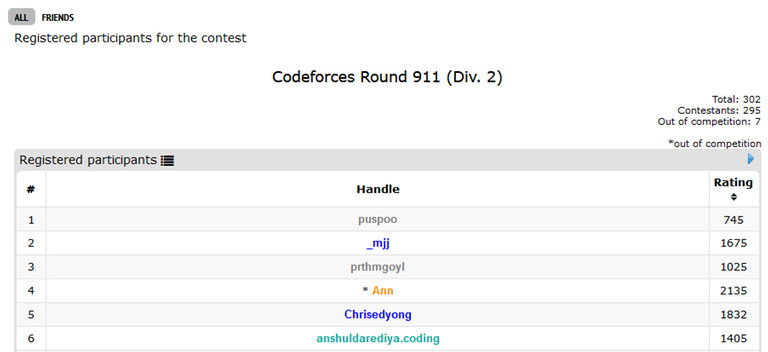

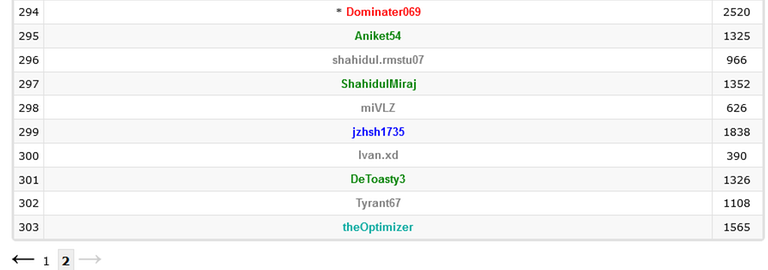

The contest website is at this link, and open mirrors for the two divisions will be held simultaneously with the contest: Division 1 and Division 2.

I hope to see you on the stream! Good luck to all of the participating teams!

*: Not a typo; due to ongoing schedule difficulties resulting from COVID, the 2023 regional is being held in 2024.