Hello everyone.

The idea of dynamic problem scores is not new. As far as I can remember, something that was discussed back in 2000. Since then, the issue has surfaced several times — one of the latest experiments have been organized by Alex_KPR.

Why do we need different problem scores? As correctly observed RAD, they are actually needed for contests where the solution has a chance to fail after coding phase. For example, Bob solved easy A, and Peter solved easy A and really hard B. But Peter's A failed system tests and he solved B later (and may not from the first attempt). In this case, if the problems were equal in value, Peter will take place below Bob, that somehow unfair.

Typically Codeforces problems has divible by 500 values: 500, 1000, 1500, etc. Usually we try to pick up a 5-problems sets, varying in complexity. In this case, each contestant will find interesting problems (for him/her). The traditional set of tasks includes problems 500-1000-1500-2000-2500.

In practice, it's not that simple. Sometimes it turns out that the authors and testers did not guess the complexity of the problem in terms of regular contestants. What to do?

The experiment is as follows. Assign the value of the task dynamically depending on the number who have solved this problem. During the main contest time here will be taken into account the submissions with verdict "Pretests passed", but after system test — "Accepted". Of course, solutions of out-of-competition participants will not be counted.

The idea is as follows:

- If a problem solved by half to all the contestants, then it is worth 500 points,

- If a problem solved by quater to half of all the contestants, then it is worth 1000 points,

- And so on.

More formally, in the following table:

| Solvers fraction | Max. problem points |

|---|---|

| (1/2, 1] | 500 |

| (1/4, 1/2] | 1000 |

| (1/8, 1/4] | 1500 |

| (1/16, 1/8] | 2000 |

| (1/32, 1/16] | 2500 |

| [0, 1/32] | 3000 (maximum problem score) |

Number of round participants = who made at least one attempt in this round.

That's it! As you can see the idea is very simple and not overloaded with formulas and details. You may view results of past Codeforces contests by links like "Final standings". We temporary recalculated results according experimental rules. Later we will return them back.

Today 2021-2022 ICPC, NERC, Northern Eurasia Onsite (Unrated, Online Mirror, ICPC Rules, Teams Preferred) will use such experimental rules. We guarantee that the problem A will be easiest problem (as Jury thinks), but the order of the remaining problems — random. Good luck!

Smoother dynamic problem scores (250 pts steps)

Smoother realization of dynamic problem scores with a step of 250 points has been implemented. As before, if the amount of contestants who solved a problem is increased twice, max. problem score is decreased by 500 points. Now also if the amount of successful contestants is increased  times, score is decreased by 250 points. The table below illustrates max. problem score dependency from the percentage of contestants who solved the problem.

times, score is decreased by 250 points. The table below illustrates max. problem score dependency from the percentage of contestants who solved the problem.

| Solvers fraction | Max. problem points |

|---|---|

| (0.707, 1] | 250 |

| (0.500, 0.707] | 500 |

| (0.353, 0.500] | 750 |

| (0.250, 0.353] | 1000 |

| (0.176, 0.250] | 1250 |

| (0.125, 0.176] | 1500 |

| (0.088, 0.125] | 1750 |

| (0.062, 0.088] | 2000 |

| (0.044, 0.062] | 2250 |

| (0.031, 0.044] | 2500 |

| (0.022, 0.031] | 2750 |

| [0, 0.022] | 3000 |

May be more dynamism can be added. Suppose 100 people participate and A is solved by 50 people, then it gets 1000 point, and if it is solved by 51 people then it gets 500 point. So, a sharp boundary may be avoided. Just a thought.

Agree.May be some formula can be thought of to calculate the value of a problem just as codechef long contest does linkYour text to link here....

How will the decreasing scores work? How many points per minute will be deducted if we don't know the original scores? Or are scores just calculated at the end of the contest?

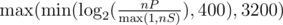

An interesting formula to consider would be , for nP the number of people who solve at least 1, nS the number of people who solve that problem. This gives a minimum and maximum bound of 400 and 3200 (those aren't fixed), while still making this dynamic.

, for nP the number of people who solve at least 1, nS the number of people who solve that problem. This gives a minimum and maximum bound of 400 and 3200 (those aren't fixed), while still making this dynamic.

My vote goes to min(3000,400*(1+lg2(nR/nS))) where nR is the number of rated contestants and nS is the number who correctly solve the problem. We may round to the nearest 50 or 100 to make the numbers look "nice", but rounding to 500s creates too much discontinuity.

As usual X / 250 per minute, where X is the problem max. score.

In this way, maybe your rank during the contest will change sharply several times =)

Interesting idea, I'm really excited.

That's an interesting idea. I also think that computing the scores without discontinuities would be better. Another thing you could provide to help us decide on a strategy is the fraction of people that solved each problem in each of the past rounds.

I think the overall result of round #117 was positive. Except that problem B and D got the same score (3500) whilst one has 15 solutions and the other 86 solutions (More or less, another issue is the lack of stats specific to the contest rather than overall). I think there is a world of difference between those amounts. A better algorithm will assign around 500 points more to the harder of them, I think.

Indeed, you already count out-of-competition contestants. However, I totally agree that there should be a formula to make the discrepancy between problems' points "smoother".

How about assigning 500 points to the most solved problem, 1000 to the next one, 1500 to the next one ... 2500 to the least solved problem? In case of a tie you can assign the same score to tied problems.

If one problem was solved by x contestants and another one was solved by x + 1 contestants it will be totally unfair to give this problems different score.

But now such x exists anyway. It would be much better to make some continuous score function from number of persons solved.

Do you think the problems solved by 1000 and 1001 participant should have costs 500 and 1000?

I wanted to solve the problem described by this article (the problem makers cannot always sort problems by difficulty) and the problem described by vexorian (a problem solved by 86 people, another by 15 people and both with the same score) with the simplest algorithm. It is not the best one (a continous function would be best), but it is better than the method described in this article.

Problem A wasn't the easiest one!!! :-(

For the sake of continuity, I suggest the score for a question to be shared by all those who solved the problem. This way, the initial problem score could be ceil( 500 * numberOfUsers) / UserShare.

The share could be relative to the time taken by user to solve in comparisson to the sum of time taken by all users who solved during the contest time. The faster you do it, the more you get from the maximum score.

Although I have to admit, thinking of the details make it seem more complicated than necessary.

I don't think it's good formula. 1. Problem soved by 2 users is not really two times easer, then problem solved by 2 users. 2. It hould be scaled more close to existing.

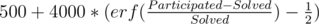

I think something like . It's not very good now, because if half of users solved problem it will cost about 1800. But i think that it's question of scaling value under erf.

. It's not very good now, because if half of users solved problem it will cost about 1800. But i think that it's question of scaling value under erf.

"Typically Codeforces problems has divible by 500 values"

Can anyone explain why all this is written 3 years ago but I see it in "Recent actions" and see it first-time now?

The post was updated