Hello!

I posted a tutorial on the Divide and Conquer Dynamic Programming Optimisation on my YouTube channel, Algorithms Conquered. It covers the problem Subarray Squares from the CSES Advanced Techniques problem set. Check it out if you're interested!

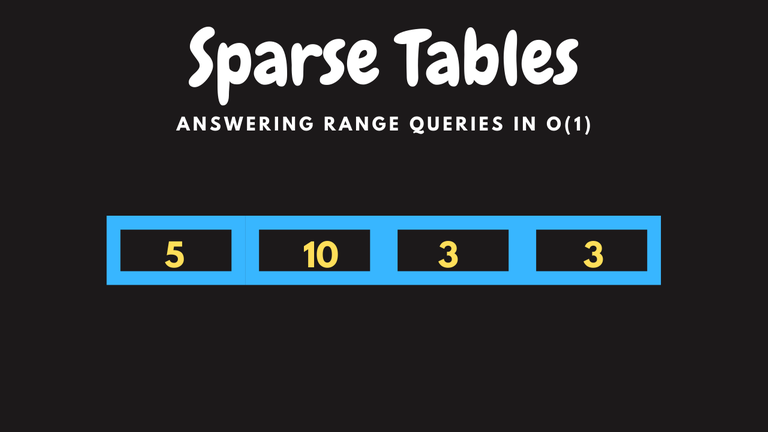

I also have a tutorial on the Convex Hull Trick DP optimisation (surprisingly, the most-viewed and longest-watched tutorial on my channel), as well as tutorials on other topics such as Persistent Segment Trees, Sparse Tables, Mo's Algorithm and Parallel Binary Search.