437A - The Child and Homework

We enumerate each choice i, and then enumerate another choice j (j ≠ i), let cnt = 0 at first, if choice j is twice longer than i let cnt = cnt + 1, if choice j is twice shorter than i let cnt = cnt - 1. So i is great if and only if cnt = 3 or cnt = - 3.

If there is exactly one great choice, output it, otherwise output C.

437B - The Child and Set

We could deal with this by digits.

Because lowbit(x) is taking out the lowest 1 of the number x, we can enumerate the number of the lowest zero.

Then, if we enumerate x as the number of zero, we enumerate a as well, which a × 2x is no more than limit and a is odd. We can find out that lowbit(a × 2x) = 2x.

In this order, we would find out that the lowbit() we are considering is monotonically decresing.

Because for every two number x, y, lowbit(x) is a divisor of lowbit(y) or lowbit(y) is a divisor of lowbit(x).

We can solve it by greedy. When we enumerate x by descending order, we check whether 2x is no more than sum, and check whether there is such a. We minus 2x from sum if x and a exist.

If at last sum is not equal to 0, then it must be an impossible test.

Why? Because if we don't choose a number whose lowbit = 2x, then we shouldn't choose two numbers whose lowbit = 2x - 1. (Otherwise we can replace these two numbers with one number)

If we choose one number whose lowbit = 2x - 1, then we can choose at most one number whose lowbit = 2x - 2, at most one number whose lowbit = 2x - 3 and so on. So the total sum of them is less than 2x and we can't merge them into sum.

If we don't choose one number whose lowbit = 2x - 1, then it's just the same as we don't choose one number whose lowbit = 2x.

So the total time complexity is O(limit).

437C - The Child and Toy

The best way to delete all n nodes is deleting them in decreasing order of their value.

Proof:

Consider each edge (x, y), it will contribute to the total cost vx or vy when it is deleted.

If we delete the vertices in decreasing order, then it will contribute only min(vx, vy), so the total costs is the lowest.

437D - The Child and Zoo

First, there is nothing in the graph. We sort all the areas of the original graph by their animal numbers in decreasing order, and then add them one by one.

When we add area i, we add all the roads (i, j), where j is some area that has been added.

After doing so, we have merged some connected components. If p and q are two areas in different connected components we have merged just then, f(p, q) must equals the vi, because they are not connected until we add node i.

So we use Union-Find Set to do such procedure, and maintain the size of each connected component, then we can calculate the answer easily.

437E - The Child and Polygon

In this problem, you are asked to count the triangulations of a simple polygon.

First we label the vertex of polygon from 0 to n - 1.

Then we let f[i][j] be the number of triangulations from vertex i to vertex j. (Suppose there is no other vertices and there is an edge between i and j)

If the line segment (i, j) cross with the original polygon or is outside the polygon, f[i][j] is just 0. We can check it in O(n) time.

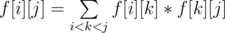

Otherwise, we have  , which means we split the polygon into the triangulation from vertex i to vertex k, a triangle (i, k, j) and the triangulation from vertex k to vertex j. We can sum these terms in O(n) time.

, which means we split the polygon into the triangulation from vertex i to vertex k, a triangle (i, k, j) and the triangulation from vertex k to vertex j. We can sum these terms in O(n) time.

Finally,the answer is f[0][n - 1]. It's obvious that we didn't miss some triangulation. And we use a triangle to split the polygon each time, so if the triangle is different then the triangulation must be different, too. So we didn't count some triangulation more than once.

So the total time complexity is O(n3), which is sufficient for this problem.

438D - The Child and Sequence

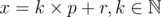

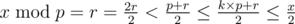

The important idea of this problem is the property of  .

.

Let  .

.

So,  .

.

If k = 0,  remains to be x.

remains to be x.

If k ≠ 0,  .

.

We realize every time a change happening on x, x will be reduced by at least a half.

So let the energy of x become  . Every time when we modify x, it may take at least 1 energy.

. Every time when we modify x, it may take at least 1 energy.

The initial energy of the sequence is  .

.

We use a segment tree to support the query to the maximum among an interval. When we need to deal with the operation 2, we modify the maximum of the segment until it is less than x.

Now let's face with the operation 3.

Every time we modify an element on the segment tree, we'll charge a element with  power.

power.

So the total time complexity is :  .

.

By the way, we can extend the operation 3 to assign all the elements in the interval to the same number in the same time complexity. This is an interesting idea also, but a bit harder. You can think of it.

438E - The Child and Binary Tree

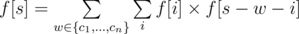

Let f[s] be the number of good vertex-weighted rooted binary trees whose weight exactly equal to s, then we have:

f[0] = 1

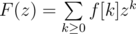

Let F(z) be the generating function of f. That is,

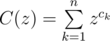

And then let

So we have:

F(z) = C(z)F(z)2 + 1

`` + 1'' is for f[0] = 1.

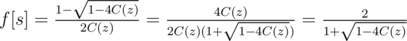

Solve this equation we have:

So the remaining question is: how to calculate the multiplication inverse of a power series and the square root of a power series?

There is an interesting algorithm which calculate the inverse of a power series F(z):

We use f(z) ≡ g(z) (mod zn) to denote that the first n terms of f(z) and g(z) are the same.

We can simply calculate a formal power series R1(z) which satisfies R1(z)F(z) ≡ 1 (mod z1)

Next, if we have Rn(z) which satisfies Rn(z)F(z) ≡ 1 (mod zn), we will get:

(Rn(z)F(z) - 1)2 ≡ 0 (mod z2n)

Rn(z)2F(z)2 - 2Rn(z)F(z) + 1 ≡ 0 (mod z2n)

1 ≡ 2Rn(z)F(z) - Rn(z)2F(z)2 (mod z2n)

R2n(z) ≡ 2Rn(z) - Rn(z)2F(z) (mod z2n)

We can simplely use Fast Fourier Transform to deal with multiplication. Note the unusual mod 998244353 (7 × 17 × 223 + 1), thus we can use Number Theoretic Transform.

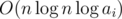

By doubling n repeatedly, we can get the first n terms of the inverse of F(z) in  time. It's because that

time. It's because that

We can just use the idea of this algorithm to calculate the square root of a power series F(z):

We can simply calculate a power series S1(z) which satisfies S1(z)2 ≡ F(z) (mod z2n)

Next, if we have Sn(z) which satisfies Sn(z)2 ≡ F(z) (mod zn), we will get:

(Sn(z)2 - F(z))2 ≡ 0 (mod z2n)

Sn(z)4 - 2Sn(z)2F(z) + F(z)2 ≡ 0 (mod z2n)

Sn(z)2 - 2F(z) + F(z)2Sn(z) - 2 ≡ 0 (mod z2n)

4F(z) ≡ Sn(z)2 + 2F(z) + F(z)2Sn(z) - 2 (mod z2n)

4F(z) ≡ (Sn(z) + F(z)Sn(z) - 1)2 (mod z2n)

So,

By doubling n repeatedly, we can get the first n terms of the square root of F(z) in  time.

time.

That's all. What I want to share with others is this beautiful doubling algorithm.

So the total time complexity of the solution to the original problem is  .

.

There is an algorithm solving this problem using divide and conquer and Fast Fourier Transform, which runs in  . See the C++ code and the Java code for details.

. See the C++ code and the Java code for details.