/***

* _____ ___ ___ ___ ___ __ ___ _ _

* |_ _| / __| / _ \ / __| |_ ) / \ |_ ) | | | O O o

* | | \__ \ | (_) || (__ / / | () | / / |_ _| o

* _|_|_ |___/ \___/ \___| _____ /___| _\__/ /___| _|_|_ [O]__ZT

* _|"""""_|"""""_|"""""_|"""""_| _|"""""_|"""""_|"""""_|"""""_|======}

* "`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`000--o\.

* ___ ___ ___ ___ _ ___ ___ ___ _ ___

* o O O / \ | _ \ | _ \ |_ _| | | | __| / _ \ / _ \ | | / __|

* o | - | | _/ | / | | | |__ | _| | (_) || (_) | | |__ \__ \

* TS__[O] |_|_| _|_|_ |_|_\ |___| |____| _____ _|_|_ \___/ \___/ |____| |___/

* {======_|"""""_| """ _|"""""_|"""""_|"""""_| _| """ _|"""""_|"""""_|"""""_|"""""|

* ./o--000"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-"`-0-0-'

*/

Hello everyone!

We're very happy to announce and invite you to TLX Special Open Contest (TSOC) — April Fools 2024!

Having a break from the past 3 years, and here I am, a sudden comeback on the April Fools' contest 🤡

We strongly encourage you to read all the problems!

Go Go Go Go

- Contest link: TLX Special Open Contest (TSOC) — April Fools 2024

- Time: 2nd April, 2024, 20:35 UTC+7 (13.35 UTC)

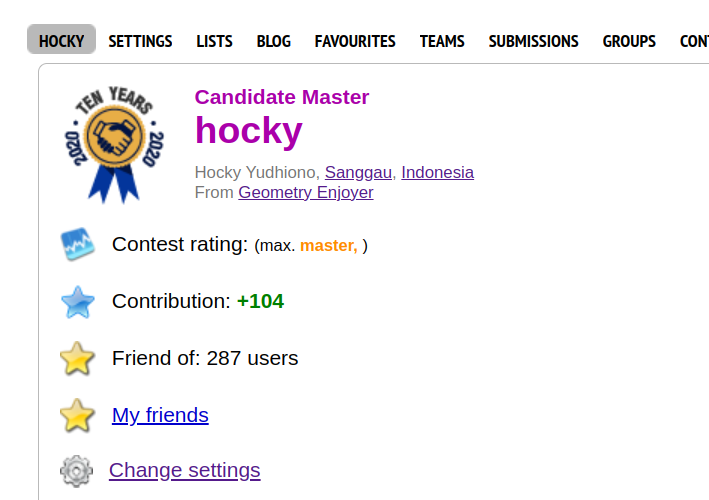

- Writers: hocky x Pyqe

- Duration: 2 hours 30 minutes

- Problems: 12 problems

P.S: We would like to thank fushar a lot lot lot as an organizer who worked hard to prepare the TLX platform <3~!

UPD: Contest is over!

Congratulations to the top 5:

Congratulations to our first solvers:

A: fonmagnus

C: CDuongg

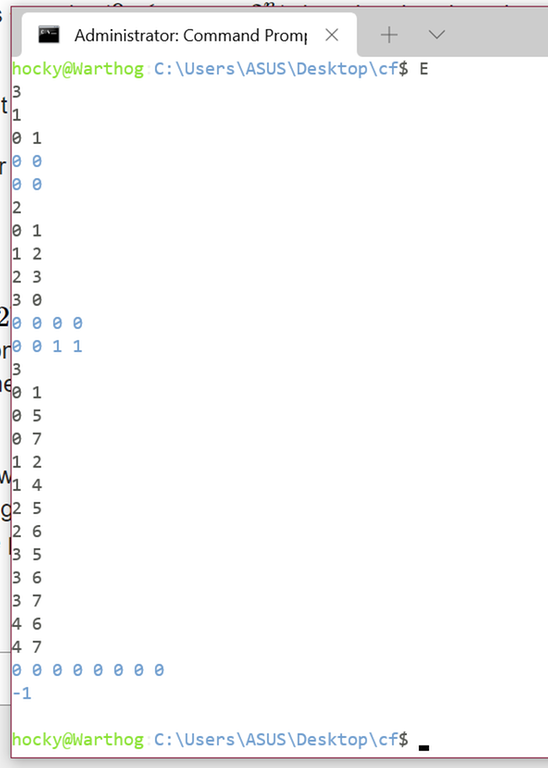

E: no one 😭😭😭😭

F: CDuongg

G: Golovanov399

H: Ackhava

I: Maskrio

J: natsugiri

K: yellowtoad

Problems I like:

Problems I hate the most:

Editorial is available in the contest!

Thank you for participating!

You can now upsolve the problems here.